Log Shipping is SQL Server technology that is available in both the Standard and Enterprise editions. The technology may be useful in solving the following problems:

- Creation of read-only servers to perform heavy SQL queries and reduce the load on the primary server

- Creating backup servers that can be quickly put into operation in the case of a primary server failure

- Creating a secondary (slave) server, where data is updated with a fixed delay

What is Log Shipping?

Log shipping is a process that allows the user to automatically synchronize a primary database with databases located on other servers (secondary databases). This process involves creation of a transaction log backup on the primary server, as well as delivery and restoration of the backup on a secondary server. Due to its speed, simple setup and support, log shipping can be a great alternative to replication or mirroring. However, unlike replication, databases on secondary servers will not be writable, and their data relevance will also lag behind for a fixed time interval (which depends on the frequency of log shipping).

When Can it be Useful?

Log shipping is a tool, and like any other tool, it can be used for a variety of tasks. It can be useful in solving a number of problems, such as:

- Reducing load on the main server by creating a secondary server that processes read-only requests, such as queries for graphs and reports. In addition, you can use a secondary database as a target for ETL

- Creating backup (i.e. standby) servers that can be quickly put into operation in case of primary server failure

- Creating a secondary (slave) server, where data is updated with a fixed delay

How Does it Work?

This process can be set up manually through SQL queries. The first step is to create a database on a secondary server in standby/norecovery mode. To do this, you need to backup your primary database.

BACKUP DATABASE [PrimaryDatabase] TO DISK = N'c:\PrimaryDatabase.bak';

And restore the secondary server with the STANDBY or NORECOVERY option.

RESTORE DATABASE [SecondaryDatabase] FROM DISK = N'c:\PrimaryDatabase.bak' WITH STANDBY= 'c:\Program Files\Microsoft SQL Server\MSSQL15.MSSQLSERVER\MSSQL\DATA\SecondaryDatabase_rollback_undo.bak'

Please note that for the standby option you need to specify the path to a file that will contain pending secondary database transactions which can be used to roll back the operation. This file is as important as .ldf and .mdf files and cannot be deleted while the secondary database is in standby mode. After restoration on the secondary server, database will be in standby/read-only mode.

In order to sync databases, you need to create transaction log backup on primary database and execute restore on the secondary database. Transaction log backup is performed with BACKUP LOG command

BACKUP LOG [PrimaryDatabase] TO DISK= 'c:\PrimaryDatabase_log.bak';

To perform a transaction log backup, the primary database must have a full recovery model set.

RESTORE LOG command will help you restore the created file:

RESTORE LOG [PrimaryDatabase] FROM DISK = N'c:\PrimaryDatabase_log.bak’ WITH STANDBY = N'I:\Program Files\Microsoft SQL Server\MSSQL15.MSSQLSERVER\MSSQL\Backup\SecondaryDatabase_rollback_undo.bak'

Transaction log backups contain only changed data since the last transaction log or full backup. Their creation and recovery processes are fast, do not load databases and are small in size.

Each time a RESTORE LOG operation is performed, the secondary database will be synchronized with the primary database at the time of BACKUP LOG operation.

How Do You Automate the Process?

Although it is not hard to perform backup and restore manually, creating a script that automates this process can be a tricky task.

A good log shipping automation solution should provide not only automated backup and restore, but also delivery of backups to the secondary server, following correct recovery sequence and sending failure notifications.

We will review two tools for log shipping automation below.

SQLBackupAndFTP

SQLBackupAndFTP is a popular utility for performing backups. It has a log shipping job, which allows you to automate the log shipping process between any two servers.

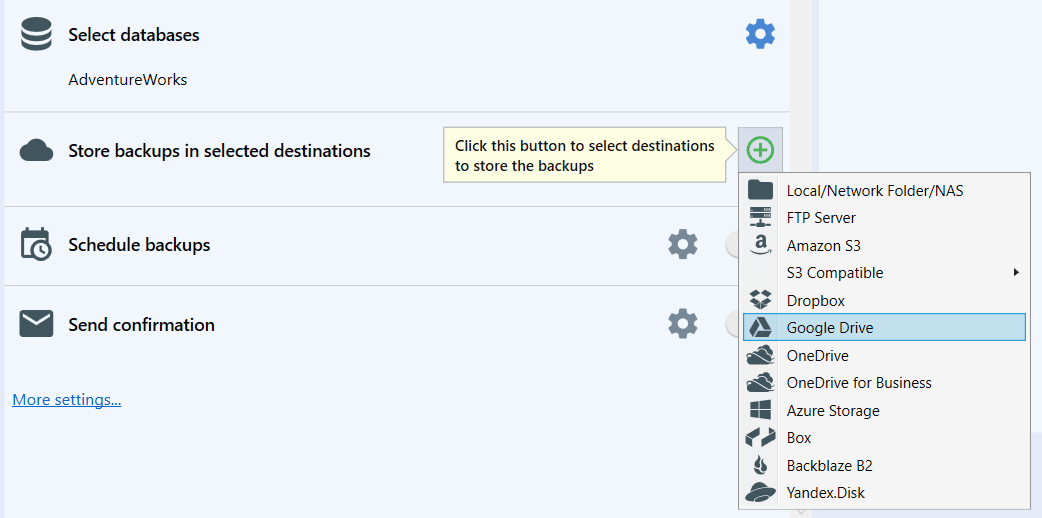

It also doesn’t require a direct connection between primary and secondary servers. All you need is buffer storage for transferring files, which can be any of the following: local/network folder, FTP/SFTP, any S3, Azure Storage, DropBox, Google Drive, OneDrive/OneDrive For Business, Backblaze B2, or Yandex.Disk.

Download SQLBackupAndFTP from the official website.

To organize the process, you need to create a backup job on a primary server and a log shipping job on a secondary server.

Backup Job

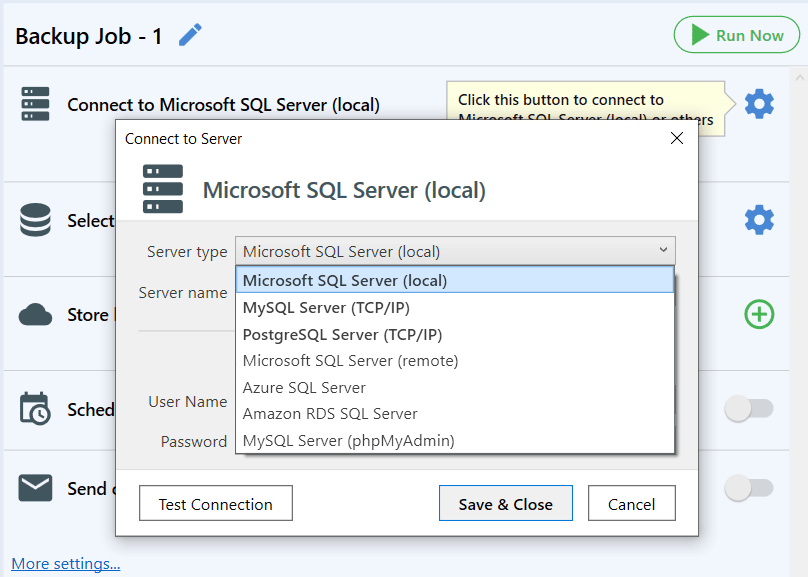

The first step after installing the app on the primary server is to configure a connection to the SQL Server. In the window that appears, configure connection details.

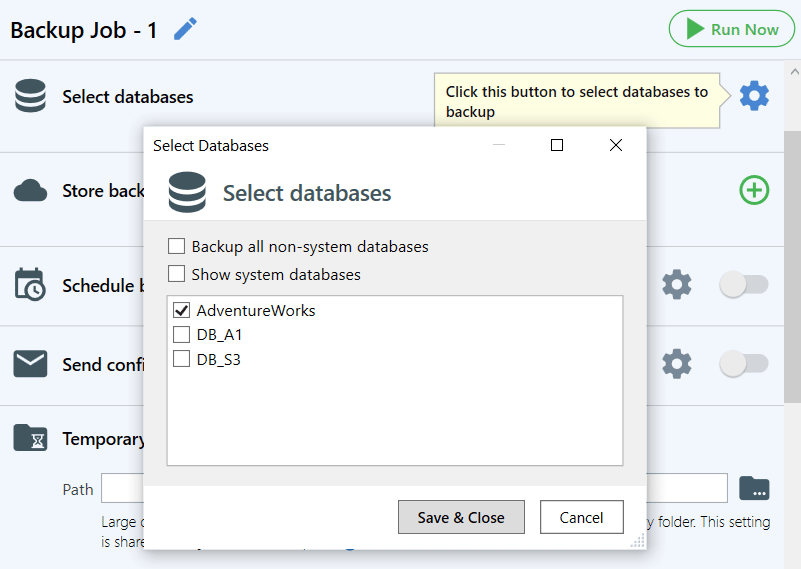

Next, select databases that should be backed up and restored to the secondary server.

You will also need to choose a location where the backups should be stored.

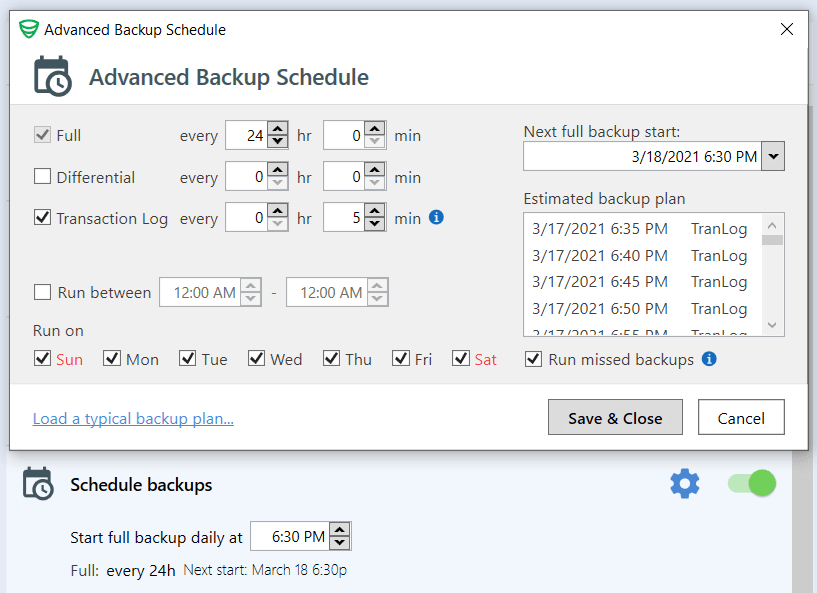

The last step is to schedule regular backups.

This completes the backup job setup. You can also enable email notifications, encryption and other useful options that are available in SQLBackupAndFTP.

Now you need to start the job to make sure everything is set up. After that, you can proceed to creating a Restore Job.

Restore Job

First, you need to install SQLBackupAndFTP on the secondary server.

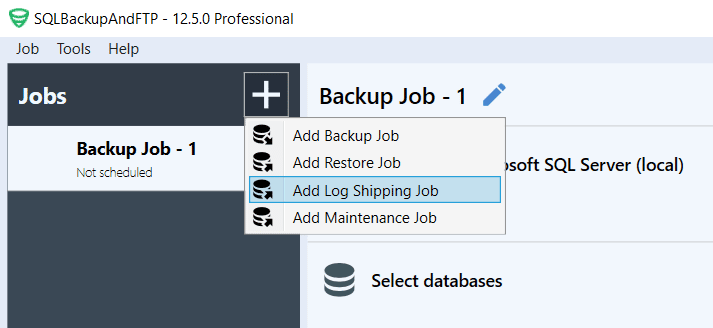

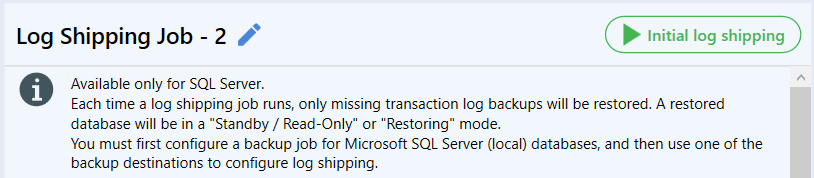

Then, create a restore job. To do this, click on the plus button and select “Log Shipping Job.”

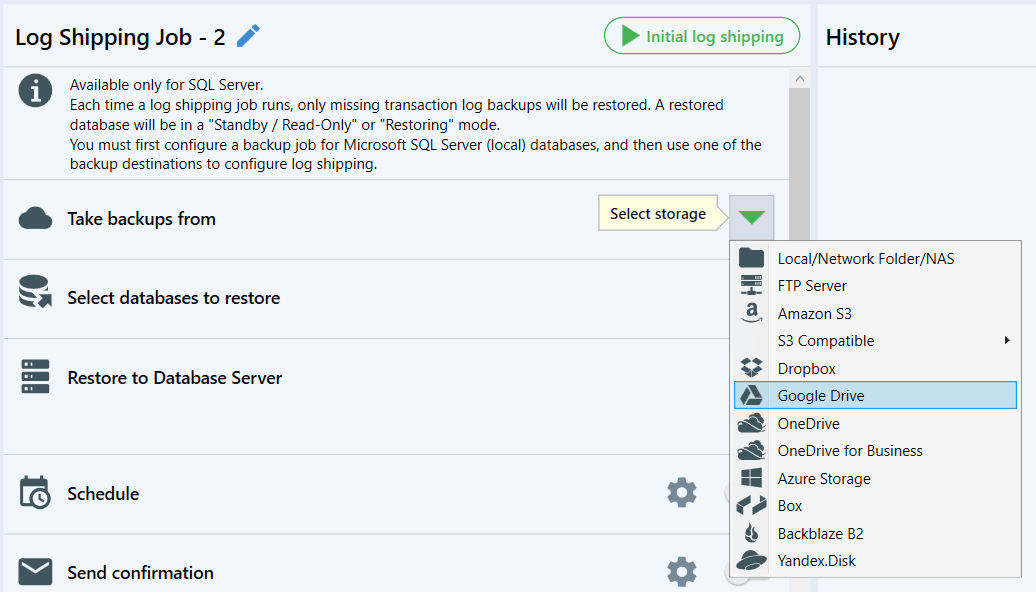

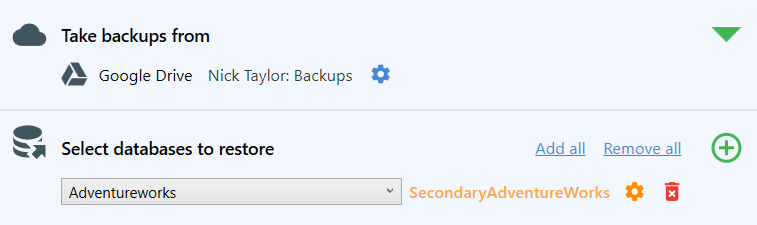

After that select a storage for backups.

Once you select storage, it will be scanned and you can select databases.

Click on the gear to change the name of the database.

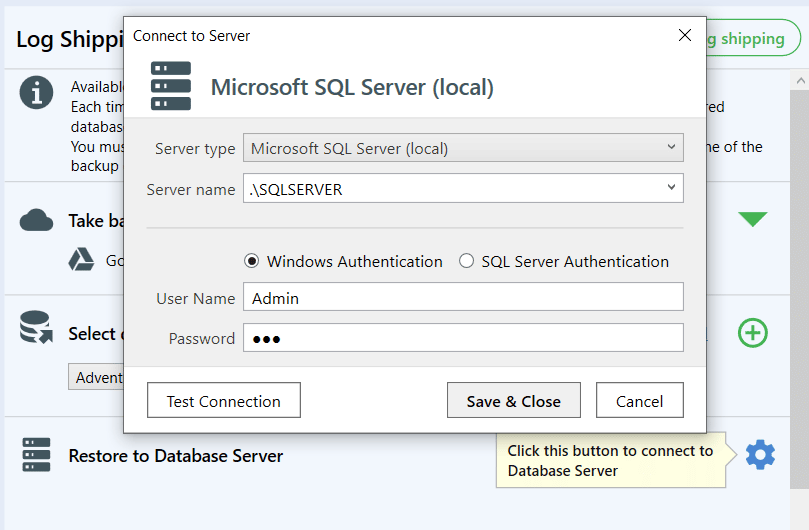

Now, you need to add a connection to a secondary DBMS.

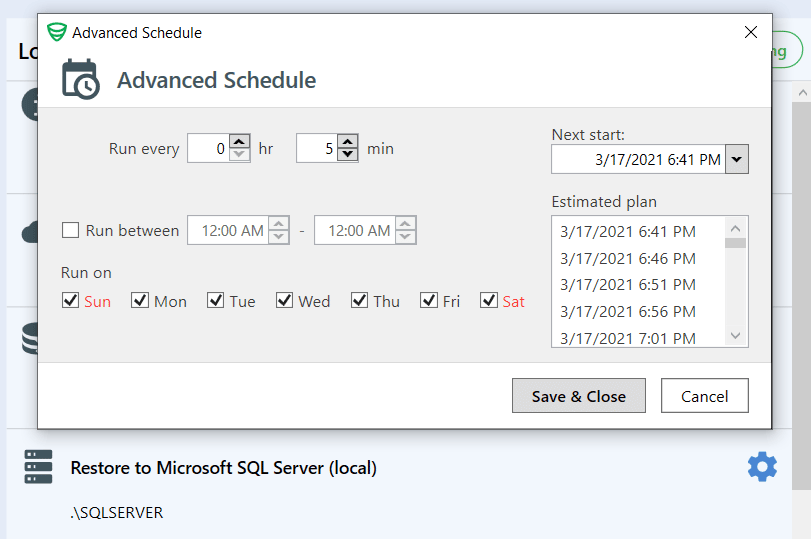

The last step is to schedule regular execution. This can be done in the schedule section.

For more complex settings, click on the gear.

As in the backup job, you can set up email notifications about failures and archive decryptions.

After that, you need to run the job by pressing the initial log shipping button. The first-time job needs to be started manually.

The job will create a database on the secondary server in read-only mode.

Log Shipping Can be Configured via SQL Server Management Studio

The following things are required to set up log shipping:

- Two standalone SQL Servers

- An available shared folder for both servers

Preparation

- Recovery model

Log shipping can only be performed for databases with the Full or Bulk-Logged recovery model. To set the Full recovery model, execute the following SQL query:

ALTER DATABASE [AdventureWorks] SET RECOVERY FULL WITH NO_WAIT

- Permissions

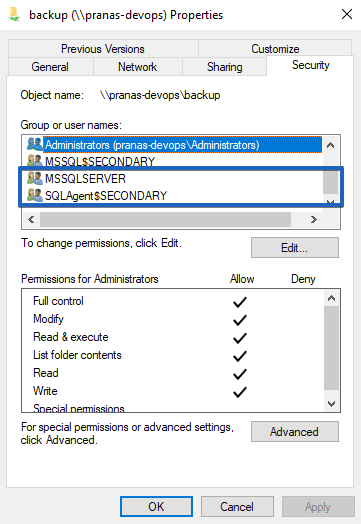

It is crucial to provide read/write permissions for a shared folder for the following users MSSQLSERVER and SQLAgent on both primary and secondary servers.

- Configure SQL Server Agent Launch

By default, the SQL Server Agent service is set to a manual startup type. It is necessary to change it to an automatic. To do this, please follow these steps:

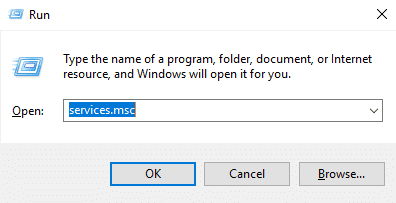

- Press “Windows+R” and enter services.msc

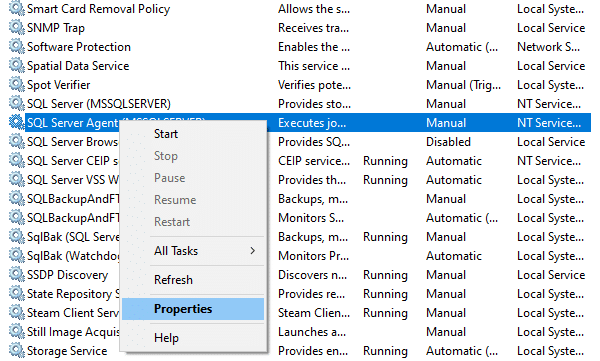

- Find SQL Server Agent in the list, right-click and select “Properties”

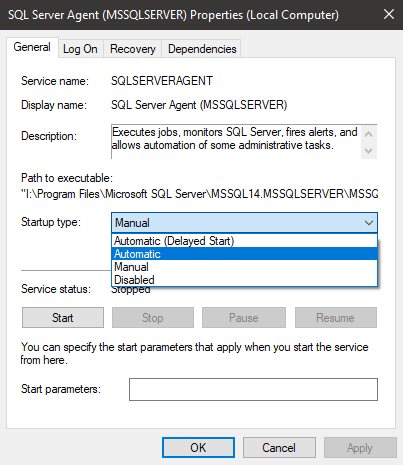

- Set “Startup Type” to “Automatic” and click “OK” to save the settings.

Log Shipping Step-by-Step Setup

Below is a step-by-step guide on how to set up log shipping between the primary and secondary servers.

- Open SSMS and connect to the primary SQL Server

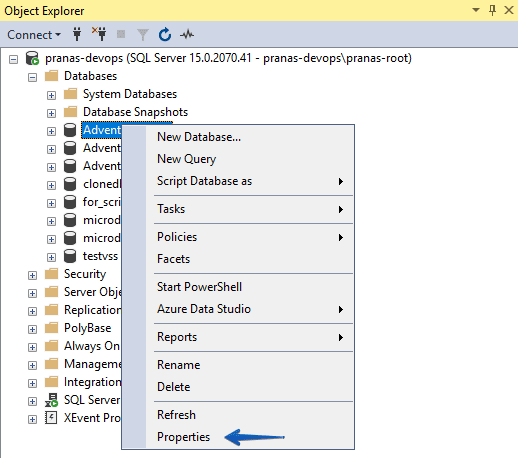

- Right-click on the database in the “Object Explorer” section in SSMS and select “Properties”

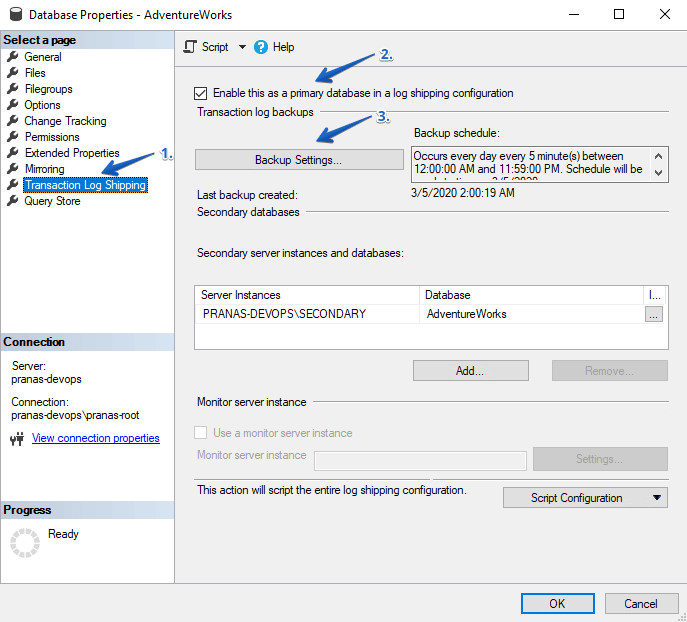

- Select “Transaction Log Shipping,” activate the checkbox “Enable this as a primary database in a log shipping configuration” and click “Backup Settings…”

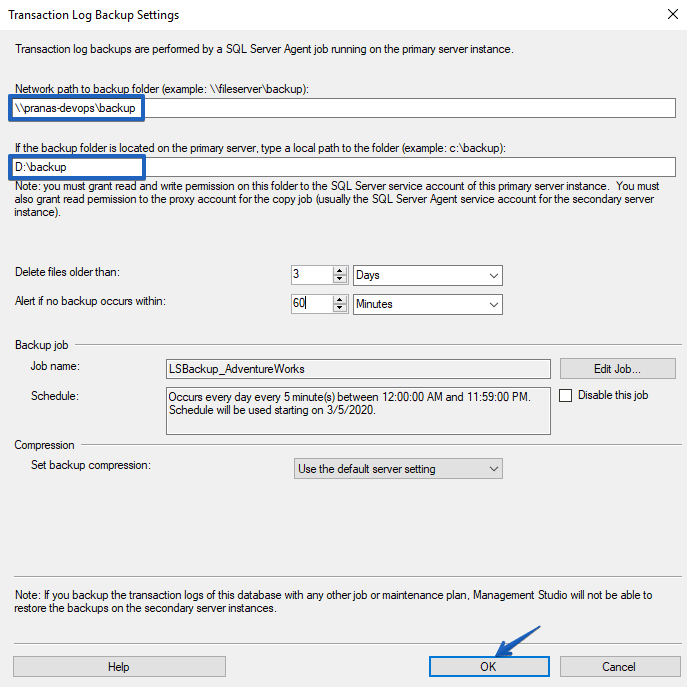

- Specify a shared folder to transfer files between servers. If the folder is located on the primary server, then it is recommended to specify the local path to that folder. When it is set, click “OK.”

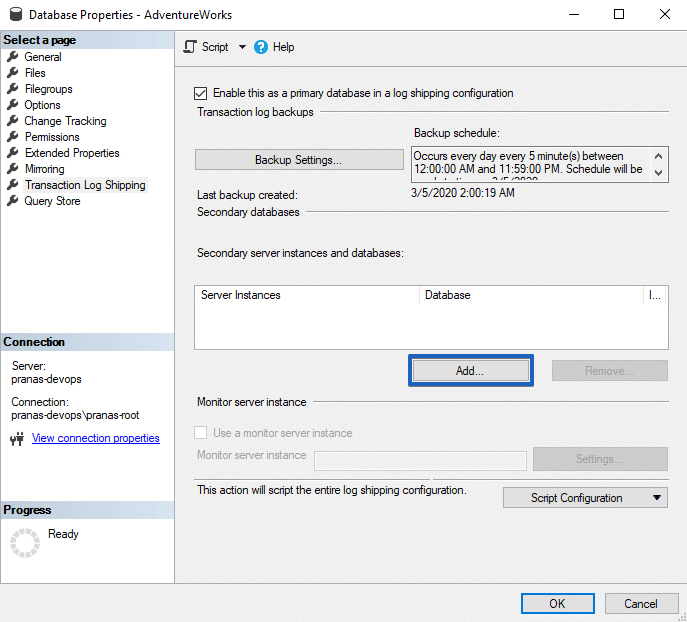

- Now add the secondary server by clicking on the “Add…” button.

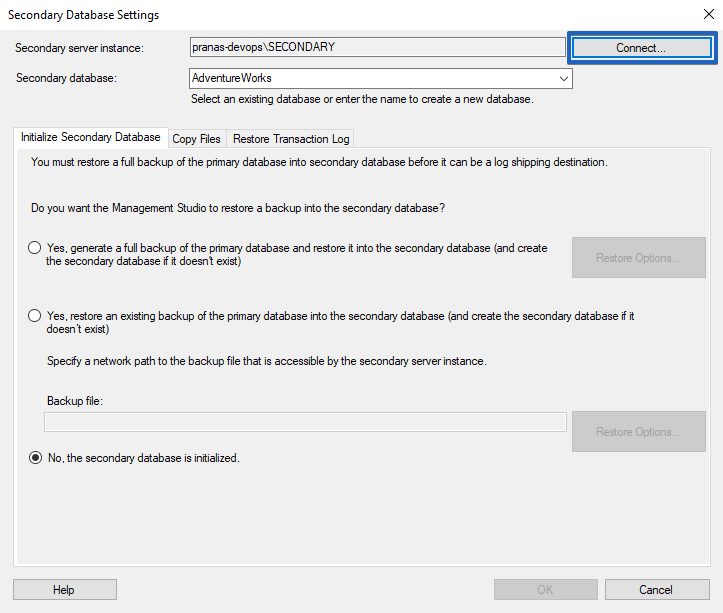

- Add a connection to the secondary server by clicking on the “Connect…” button.

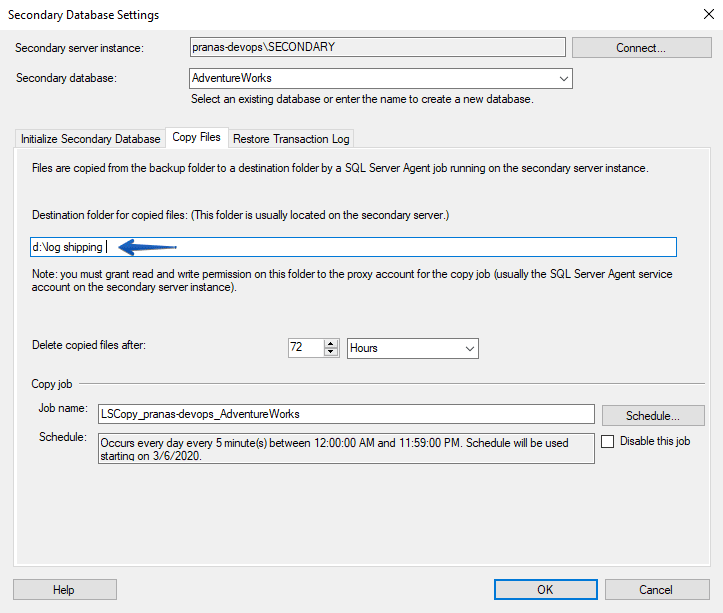

- On the “Copy Files” tab, specify the local directory on the secondary server to store log backups. SQL Agent on the secondary server will copy backup files from the shared folder into this directory.

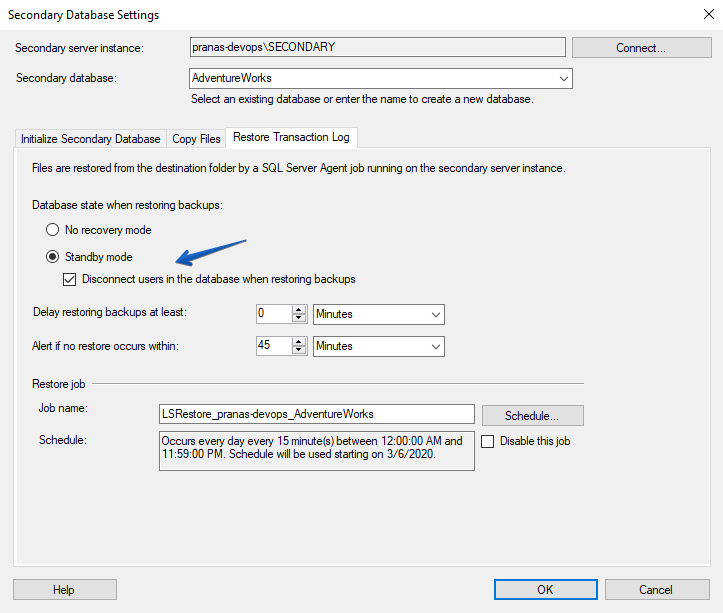

- On the “Restore Transaction Log” tab, select “Stand By” mode and “Disconnect users in the database when restoring backups.”

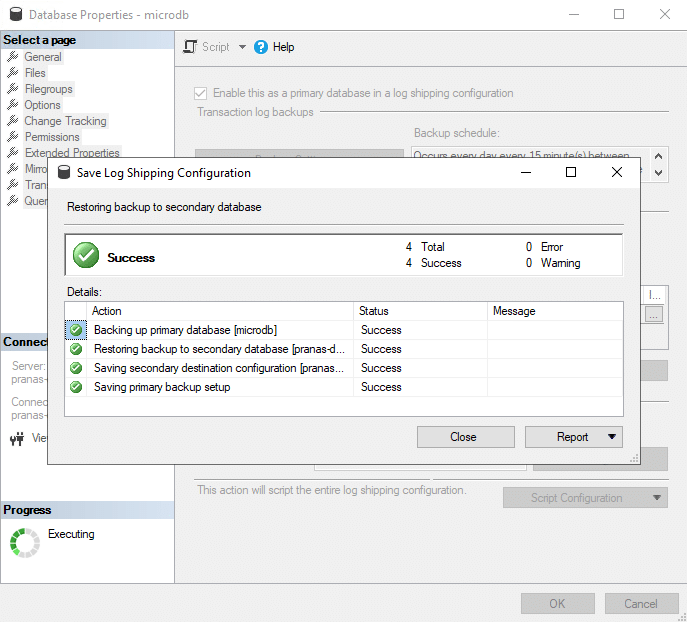

- By clicking on the “OK” button, the process of creation of a database on the secondary server starts. If everything is successful, the following window appears:

Settings Verification

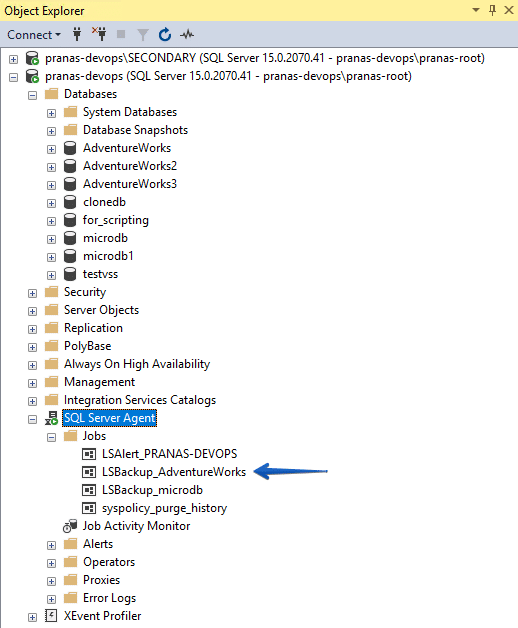

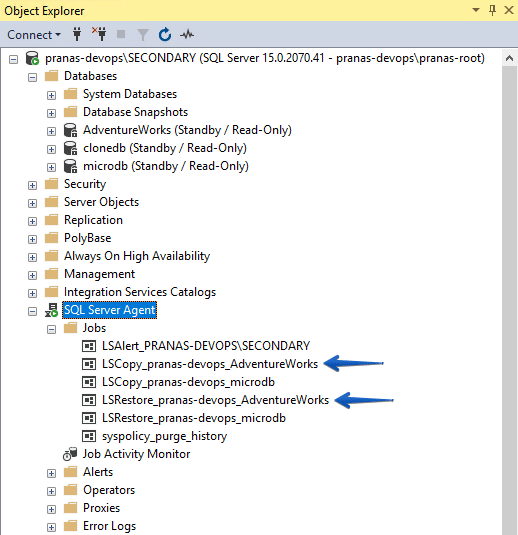

After Log Shipping activation on the primary server in “Object Explorer” -> “SQL Server Agent” -> “Jobs” a backup job should appear.

And on the secondary server, a job should appear to copy the log file and restore it.

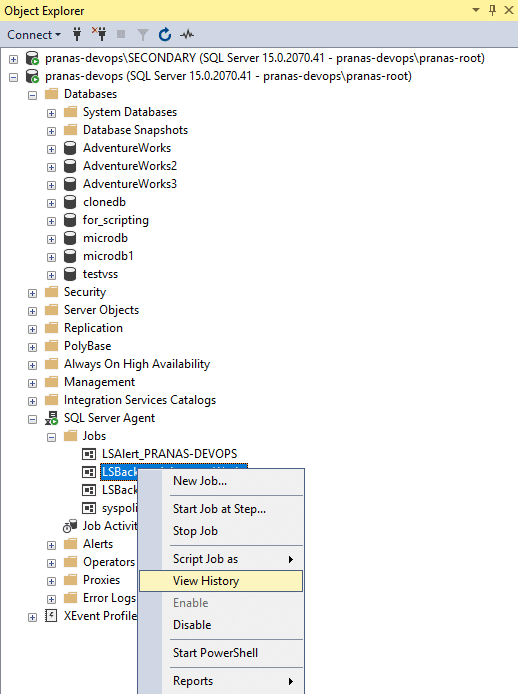

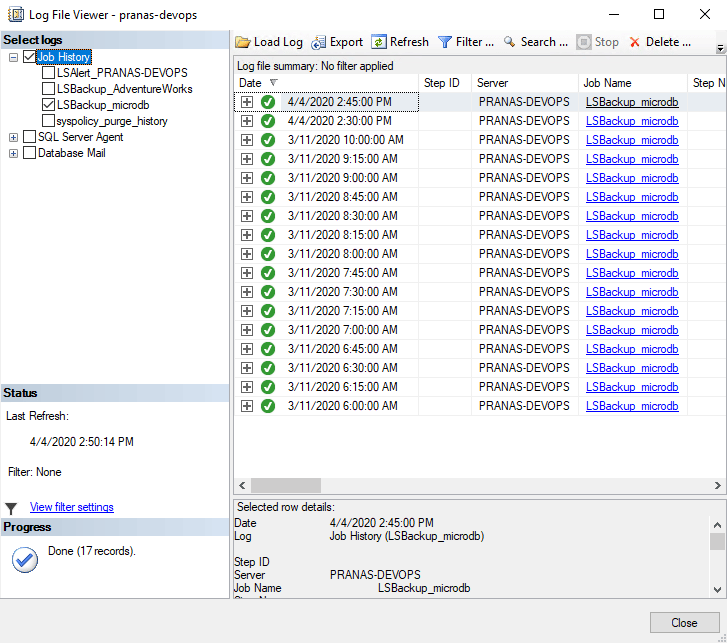

To track the actual use of transaction logs, click on the “View History” on the restore job.

Here, the history of the latest restored logs will be available.

If the needed records are absent, then check the backup and copy job for errors.

4 thoughts on “Scheduled Restore. How to Set Up Log Shipping”

Hi, When we setup log-shipping via tool, would the users will be disconnected directly when a cycle kicks in. I have a requirement not to disconnect existing connections and if possible postpone the log-shipping. Please let me know if this can be handled via tool. Thanks

Hello Anil Vemula,

Unfortunately, currently, SQL Server forcefully terminates all connections to the database during log shipping. Regrettably, this issue cannot be resolved through SQLBackupAndFTP either. However, thank you for your feature request; we have forwarded it to our development team for consideration.

Hello, I have the pro license and I’m interested in making backups using log shipping. Can I configure them to be restored to the secondary server automatically from sqlbackupftp?

Hi! Thanks for your question.

Yes — SQLBackupAndFTP includes a built-in Log Shipping Job feature that enables automated transaction log backups on the primary server and their scheduled restoration on a secondary server.

You can learn more about how to configure it in our documentation:

👉 https://sqlbackupandftp.com/docs/docs/job-settings/log-shipping-job/